Montclair Sports

Background

Most golf apps track scores. Few help players improve systematically.

Deuce GC is a comprehensive golf application focused on planning, structured practice, and performance insight. It connects course management, practice tracking, equipment decisions, and handicap analytics into a single feedback loop.

I built Deuce for Montclair Sports Unlimited as an AI-native product experiment. I designed the system architecture, interaction model, and behavioral framework, and used AI to accelerate engineering, code generation, debugging, and iteration. The goal was not just to ship features, but to explore how a senior product designer can use AI as a force multiplier across the full product lifecycle.

Why Deuce mattered

Golfers often practice without structure. They log rounds but don’t translate data into improvement.

This creates predictable failure modes:

Practice sessions disconnected from on-course weaknesses

Overemphasis on scores rather than skill deltas

Equipment decisions made without performance context

Analytics that inform but don’t guide action

Deuce reframed the problem from “round tracking” to “behavioral system design.” The objective was to create a closed-loop model:

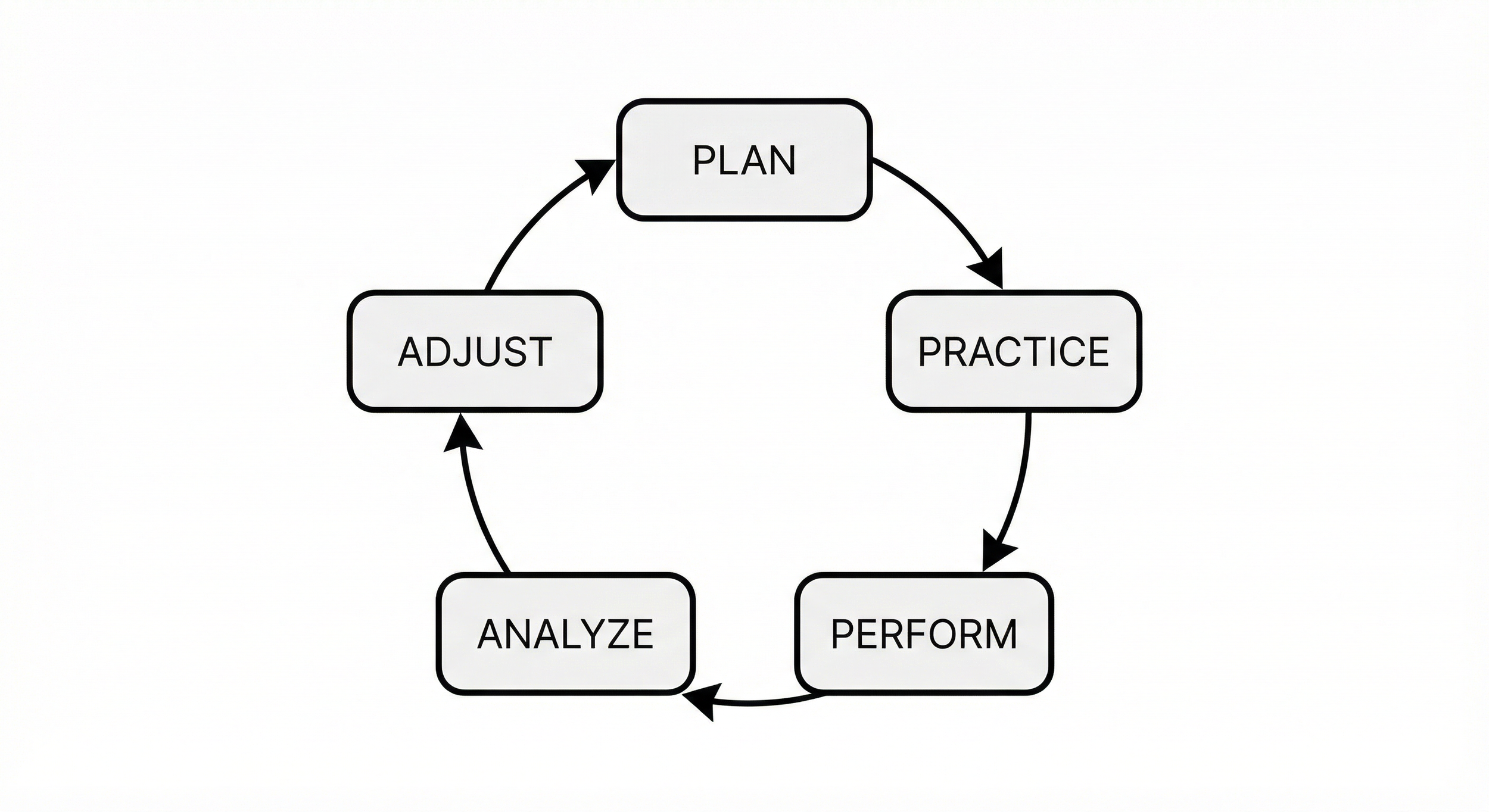

Plan → Practice → Perform → Analyze → Adjust

This system-level framing shaped every product decision.

My Role

I owned the product end-to-end as a senior product designer, using AI throughout:

Defined the product strategy and behavioral model

Designed the information architecture and interaction system

Structured the data model and improvement loop logic

Used AI to generate, refactor, and validate production code

Implemented frontend and backend architecture

Set up analytics, observability, and CI/CD

Shipped and deployed independently

This was not a prototype exercise. The application is built with Figma, Cursor, Vercel, Supabase, and GitHub.

Problem

Golf performance data is fragmented.

Players manage:

Course notes in one place

Practice drills in another

Handicap tracking separately

Equipment decisions informally

No existing product integrated these into a structured improvement system. The problem was not a lack of data. It was a lack of integration and behavioral clarity.

The challenge was designing a system that:

Encourages intentional practice

Connects drills to real performance gaps

Translates analytics into specific next actions

Scales technically without overengineering

Initial Concept Exploration

Research + Ideation

I began by framing Deuce as a behavioral systems problem rather than a feature set. Through 50+ casual on-course interviews, contextual inquiry, competitive analysis, and heuristic reviews, I identified a structural gap: golfers track rounds but rarely operate within a disciplined improvement loop.

I synthesized findings through sketching and whiteboarding before touching UI, focusing on system coherence over screens:

Mapped the improvement cycle (Plan → Practice → Perform → Analyze → Adjust)

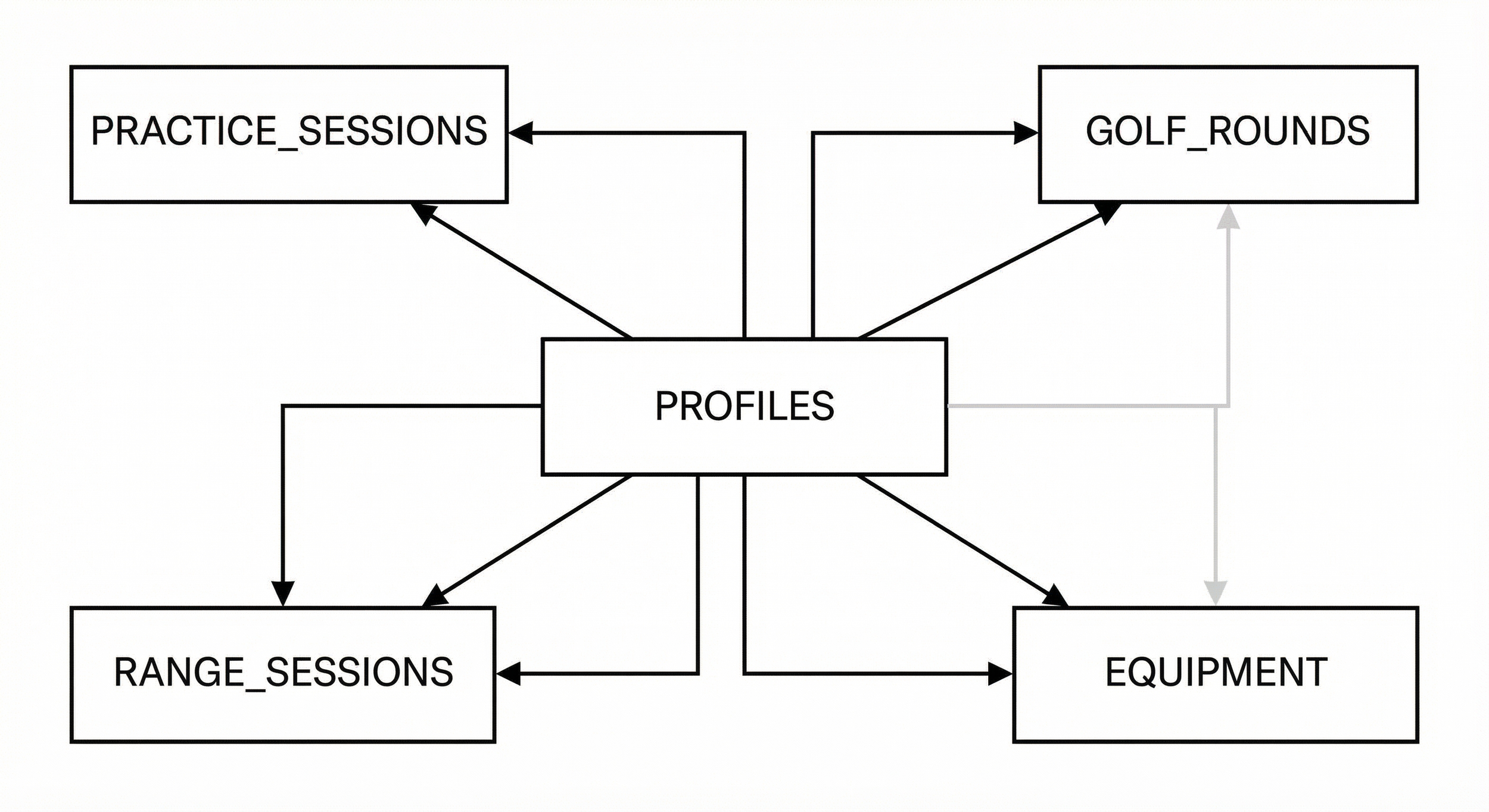

Defined core data objects: rounds, sessions, skills, equipment

Identified where analytics should inform action, not just report metrics

Clarified what needed to be global state vs. contextual interaction

This ensured the product model was stable before visual exploration began.

AI-Assisted Prototyping

With the system defined, I moved into Figma and Figma Make to accelerate exploration. I generated and iterated on ~30 prototype variations through structured prompt synthesis, using AI to expand the solution space quickly.

Tested navigation hierarchies and card structures

Explored multiple analytics visualizations

Refined practice logging flows

Evaluated edge states and empty states

AI compressed iteration cycles, but all structural decisions remained deliberate and human-led.

Transition to Development

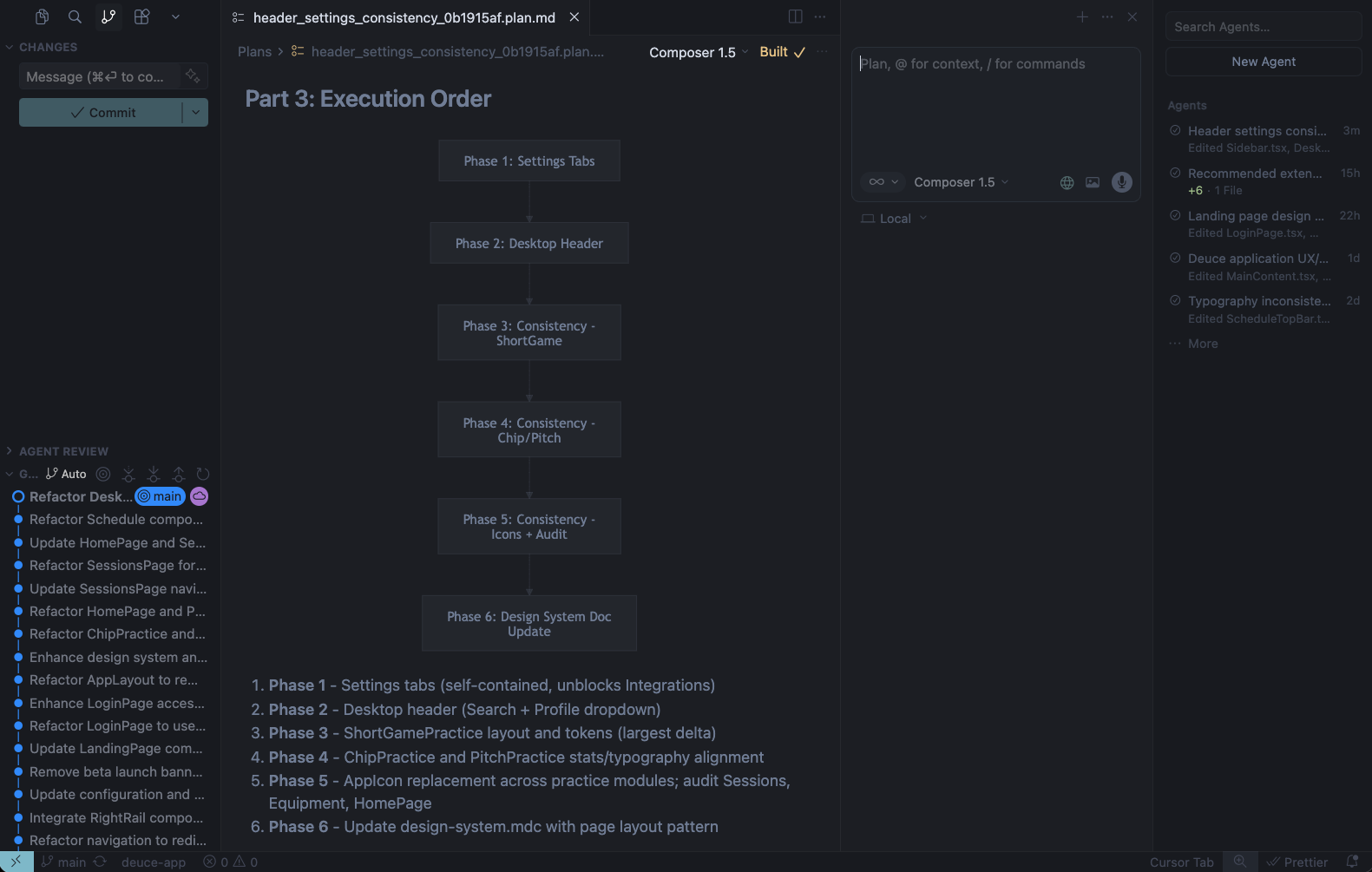

Once direction stabilized, I moved into production using Cursor and an AI-augmented workflow. The prototype concepts evolved into a structured Next.js + TypeScript architecture with Supabase, React Query, and Zustand.

Refactored the generated code into modular components

Structured server vs. client state separation

Established analytics and observability instrumentation

Used AI for debugging, refactoring, and architectural tradeoffs

This phase bridged design intent and implementation without introducing architectural drift.

Reflection on this phase

This concept exploration phase reinforced a principle in my practice: AI increases velocity, but clarity of system thinking determines quality. Research grounded the problem, whiteboarding stabilized the model, and AI expanded execution bandwidth. The leverage came not from automation, but from maintaining authorship of the system while accelerating its realization.

Constraints & Context

Solo execution: No formal engineering team. AI was leveraged as a collaborative coding partner.

System coherence: Course planning, practice sessions, analytics, and equipment needed a unified data model.

Performance sensitivity: Golf data requires multi-level granularity (round, hole, shot, session).

Speed vs. architecture: Build fast, but avoid technical debt that could compromise the integrity of analytics.

Observability: The system needed instrumentation from day one (PostHog + Sentry).

Design Approach

Behavioral System First

Before building UI, I defined the improvement model:

Planning informs practice

Practice generates skill metrics

Rounds validate or falsify the training focus

Analytics guide the next cycle

This prevented feature sprawl and kept the product aligned around improvement rather than logging.

Data Model Integrity

Rather than treating scorecards as primary, I structured the system around:

Practice session objects

Skill categories

Equipment associations

Performance projections

Handicap became a trailing indicator, not the core value proposition.

AI-Augmented Development

AI was used to:

Generate scaffolding for Next.js + TypeScript architecture

Refactor component logic

Validate Supabase data flows

Debug async state issues (React Query vs. local state separation)

Accelerate UI implementation, first with Tailwind + ShadCN and later through the migration to MUI

Crucially, I did not delegate judgment to AI. I used it to accelerate execution while maintaining architectural ownership.

Technical Challenges

1. Geospatial and GPS integration

Range practice needed real-time distance from the user to targets. I integrated the browser Geolocation API with Turf.js for yardage arcs and Haversine distance calculations. Challenges included handling permission flows, enabling high-accuracy mode, and adjusting for elevation/wind when calculating “plays like” distance. The solution used a custom useGeolocation hook with clear separation between tracking state and UI.
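
As an illustration of the distance step described above, here is a minimal Haversine sketch in TypeScript. It is not the code from range-map-utils.ts or the useGeolocation hook: the names `LatLng` and `haversineYards` are hypothetical, the Earth radius is the commonly used mean value, and elevation and wind ("plays like") adjustments are deliberately left out.

```typescript
// Hypothetical names for illustration only; not taken from the Deuce codebase.
interface LatLng {
  lat: number; // latitude in degrees
  lng: number; // longitude in degrees
}

const EARTH_RADIUS_METERS = 6371008.8; // mean Earth radius
const YARDS_PER_METER = 1.0936133;

function toRadians(degrees: number): number {
  return (degrees * Math.PI) / 180;
}

/** Great-circle distance between two points in yards, using the Haversine formula. */
function haversineYards(from: LatLng, to: LatLng): number {
  const dLat = toRadians(to.lat - from.lat);
  const dLng = toRadians(to.lng - from.lng);
  const a =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRadians(from.lat)) *
      Math.cos(toRadians(to.lat)) *
      Math.sin(dLng / 2) ** 2;
  const c = 2 * Math.atan2(Math.sqrt(a), Math.sqrt(1 - a));
  return EARTH_RADIUS_METERS * c * YARDS_PER_METER;
}
```

In a hook, the `from` point would typically come from the position reported by the browser Geolocation API and the `to` point from the selected target. Keeping the math in a pure function like this makes it testable without a device.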

2. Unified data model with multi-level granularity

Golf data had to support round, hole, shot, and session levels. I designed a schema where practice_sessions (putting, chipping, pitching) and range_sessions share a consistent structure, with RLS enforcing user isolation. Handicap and analytics are derived from these sources rather than stored separately, keeping the model coherent and avoiding drift.
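
The idea of a shared session structure with derived metrics can be sketched in TypeScript as follows. The field names (`attempts`, `successes`, `carryYards`, and so on) and the `successRateByCategory` helper are assumptions made for illustration, not the actual Deuce schema, and the derived metric is a simple success rate rather than a handicap calculation.

```typescript
// Hypothetical shapes; the real practice_sessions / range_sessions columns may differ.
type PracticeCategory = "putting" | "chipping" | "pitching";

interface SessionBase {
  id: string;
  userId: string; // row owner; an RLS policy would compare this to the signed-in user
  startedAt: string; // ISO 8601 timestamp
}

interface PracticeSession extends SessionBase {
  kind: "practice";
  category: PracticeCategory;
  attempts: number;
  successes: number;
}

interface RangeSession extends SessionBase {
  kind: "range";
  shots: { club: string; carryYards: number }[];
}

type Session = PracticeSession | RangeSession;

/** Success rate per practice category, derived on read rather than stored. */
function successRateByCategory(
  sessions: Session[]
): Partial<Record<PracticeCategory, number>> {
  const totals: Partial<Record<PracticeCategory, { attempts: number; successes: number }>> = {};
  for (const s of sessions) {
    if (s.kind !== "practice") continue; // range sessions do not carry attempts
    const t = totals[s.category] ?? { attempts: 0, successes: 0 };
    t.attempts += s.attempts;
    t.successes += s.successes;
    totals[s.category] = t;
  }
  const rates: Partial<Record<PracticeCategory, number>> = {};
  for (const category of Object.keys(totals) as PracticeCategory[]) {
    const t = totals[category];
    if (t && t.attempts > 0) rates[category] = t.successes / t.attempts;
  }
  return rates;
}
```

Because the rate is computed from the session rows each time, there is no second stored copy that could fall out of sync with the source data.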

3. Server vs. local state separation

Avoiding race conditions between React Query (server state) and local UI state was critical. I used React Query for session fetches, caching, and invalidation, and kept transient UI (e.g. active set, shot logging) in local state. This separation reduced bugs when saving sessions and refetching history.
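
One way to keep transient tracker state out of the server cache is a small pure reducer, sketched below in TypeScript. This is an assumption about shape, not the actual implementation: `TrackerState`, `TrackerAction`, and `trackerReducer` are hypothetical names, and the same logic could equally live in a Zustand store or a `useReducer` call.

```typescript
// Hypothetical local-state model for an in-progress range session.
interface LoggedShot {
  club: string;
  carryYards: number;
}

interface TrackerState {
  activeSetIndex: number;
  sets: LoggedShot[][];
}

type TrackerAction =
  | { type: "logShot"; shot: LoggedShot }
  | { type: "startNextSet" };

const initialTrackerState: TrackerState = { activeSetIndex: 0, sets: [[]] };

/** Pure reducer: returns a new state object and never mutates the previous one. */
function trackerReducer(state: TrackerState, action: TrackerAction): TrackerState {
  switch (action.type) {
    case "logShot": {
      // Append the shot to the active set only.
      const sets = state.sets.map((set, i) =>
        i === state.activeSetIndex ? [...set, action.shot] : set
      );
      return { ...state, sets };
    }
    case "startNextSet":
      // Open a new empty set and make it the active one.
      return { activeSetIndex: state.sets.length, sets: [...state.sets, []] };
  }
}
```

Nothing here touches the network. Only the finished session would be handed to the server-state layer, which keeps refetches from overwriting shots that are still being logged.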

4. Session persistence and complex UI state

The Range Session Tracker manages sets, shots, GPS state, and miss-direction flows. Persisting this to Supabase without blocking the UI required careful sequencing: validate → save → invalidate queries → close. I used optimistic patterns where appropriate and explicit loading/error states for destructive actions.
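
The validate → save → invalidate queries → close sequence can be sketched as a single async function with its side effects injected. Everything named here (`RangeSessionDraft`, `SaveDeps`, `saveAndClose`, the validation rules) is hypothetical. In the real app, `save` would presumably wrap a Supabase insert and `invalidateHistory` a React Query invalidation.

```typescript
// Hypothetical draft shape and dependencies, for illustration only.
interface RangeSessionDraft {
  sets: { club: string; shots: number }[];
}

interface SaveDeps {
  save: (draft: RangeSessionDraft) => Promise<void>; // e.g. a Supabase insert
  invalidateHistory: () => Promise<void>; // e.g. a React Query invalidation
  close: () => void; // dismiss the tracker UI
}

type SaveResult = { ok: true } | { ok: false; error: string };

/** Returns an error message, or null when the draft can be saved. */
function validateDraft(draft: RangeSessionDraft): string | null {
  if (draft.sets.length === 0) return "Add at least one set before saving.";
  if (draft.sets.some((set) => set.shots <= 0)) return "Every set needs at least one shot.";
  return null;
}

async function saveAndClose(draft: RangeSessionDraft, deps: SaveDeps): Promise<SaveResult> {
  // 1. Validate before any network call.
  const validationError = validateDraft(draft);
  if (validationError !== null) return { ok: false, error: validationError };

  // 2. Save; on failure, keep the UI open so the user can retry.
  try {
    await deps.save(draft);
  } catch (err) {
    return { ok: false, error: err instanceof Error ? err.message : "Save failed." };
  }

  // 3. Refresh server state, then 4. close.
  await deps.invalidateHistory();
  deps.close();
  return { ok: true };
}
```

Closing last means a failed save never dismisses the tracker and loses the in-progress session.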

5. Analytics at scale without overengineering

The Analytics Hub aggregates sessions across categories and time ranges. Rather than building a separate analytics backend, I used filtered client fetches and in-memory aggregation. For export, I used CSV generation (PapaParse) to keep the implementation simple while supporting user needs.
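
A minimal sketch of the in-memory aggregation described above, assuming sessions have already been fetched to the client. The `SessionSummary` fields and the `aggregateByCategory` helper are hypothetical and not taken from the AnalyticsHub code.

```typescript
// Hypothetical row shape for an already-fetched session list.
interface SessionSummary {
  category: string;
  startedAt: string; // ISO 8601 timestamp
  durationMinutes: number;
}

interface CategoryTotals {
  category: string;
  sessions: number;
  totalMinutes: number;
}

/** Groups sessions inside [from, to] by category and sums their counts and minutes. */
function aggregateByCategory(
  sessions: SessionSummary[],
  from: Date,
  to: Date
): CategoryTotals[] {
  const totals = new Map<string, CategoryTotals>();
  for (const s of sessions) {
    const t = new Date(s.startedAt).getTime();
    if (t < from.getTime() || t > to.getTime()) continue; // outside the time range
    const entry =
      totals.get(s.category) ?? { category: s.category, sessions: 0, totalMinutes: 0 };
    entry.sessions += 1;
    entry.totalMinutes += s.durationMinutes;
    totals.set(s.category, entry);
  }
  // Sort for stable chart and export ordering.
  return Array.from(totals.values()).sort((a, b) => a.category.localeCompare(b.category));
}
```

Rows in this flat shape can be passed to a chart component or to a CSV serializer such as PapaParse's `unparse`, which accepts an array of objects.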

6. Solo execution with AI

Without a dedicated engineering team, I used AI for scaffolding, refactoring, and debugging. The main challenge was maintaining architectural ownership: I defined the data model, state boundaries, and component structure; AI accelerated implementation. Code review and targeted testing (e.g. RLS, auth flows) stayed under my control.

Codebase-Specific Details

Concrete and verifiable:

range-map-utils.ts – Turf.js for yardage arcs, elevation/wind adjustments.

RangeSessionTracker – ~900 lines, GPS + sets + shots + Supabase persistence.

CoursePlanner – ~890 lines, hole-level planning with geolocation.

AnalyticsHub – Recharts, time ranges, CSV export.

practiceSessions.ts – Centralized CRUD with RLS.

APP_REFERENCE.md – Single source of truth for product and design.

System Design Decisions

Key choices included:

React Query for server state and Zustand for local UI state, kept strictly separate

Centralized practice analytics to avoid per-feature logic duplication

Modular UI components aligned to a scalable design system

CI/CD automation via GitHub Actions + Vercel for rapid iteration

Solution

Deuce shipped as a structured golf performance platform with:

Interactive course management and shot planning

Practice session tracking with analytics

Equipment management

Handicap analysis with projections

Skill-development games

The product integrates these into a unified workflow rather than standalone features.

Outcome

Shipped and deployed.

Validated:

AI can meaningfully compress build cycles for experienced designers.

A senior designer can operate across strategy, design, and engineering layers when architecture is thoughtfully scoped.

Behavioral system framing reduces feature creep and clarifies product identity.

Deuce serves as a working proof of concept for AI-accelerated product development, demonstrating that design leadership increasingly includes systems architecture and technical execution.

Reflection

This project reinforced a core belief:

AI does not replace product judgment. It amplifies it.

The real leverage was not code generation. It was defining the right system, structuring constraints clearly, and using AI to move faster without compromising architectural integrity.

At the senior level, the differentiator is not simply shipping UI. It is framing the problem correctly, designing coherent systems, and using modern tools responsibly to increase velocity while preserving product quality.